Sign Language Translator Android Application

Abstract

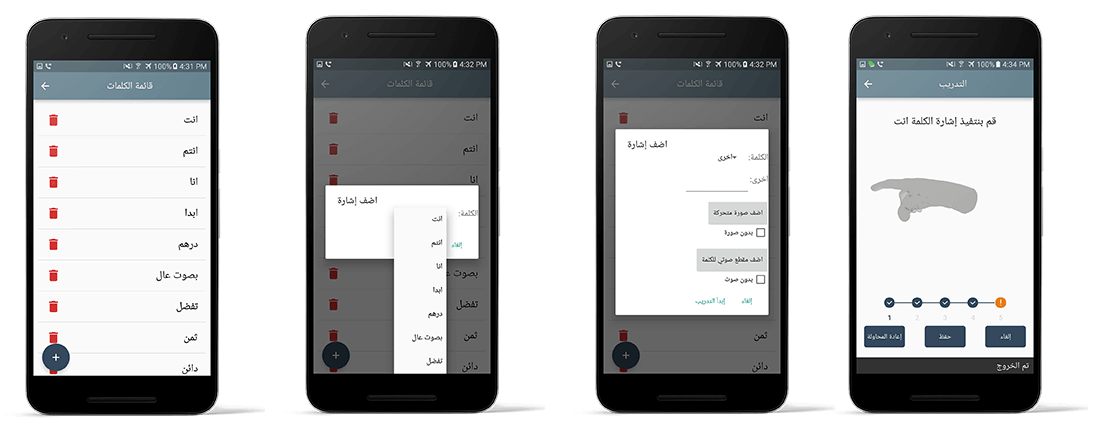

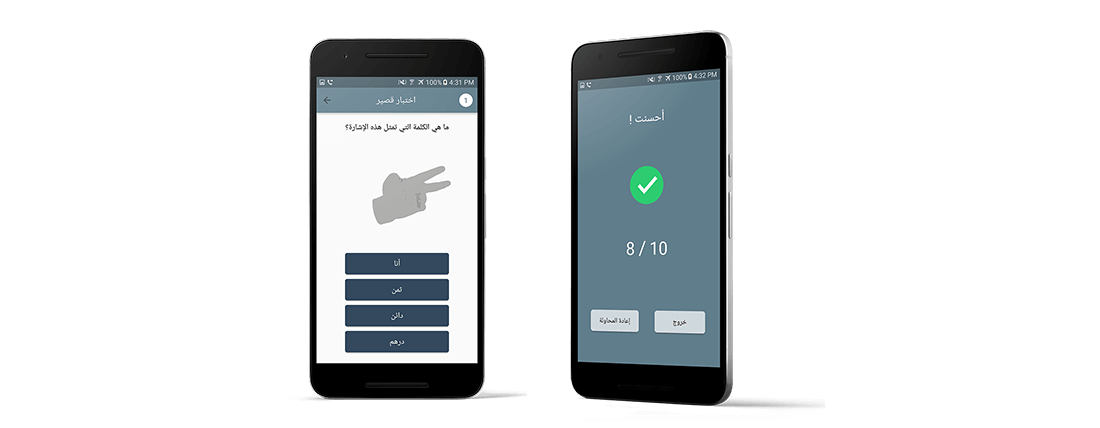

This project introduces an Android mobile application for real-time, bilateral (two-way) Arabic sign language translation. The system operates on isolated sign language words. The mobile application has four main components: sign language capture for training, sign language capture for translation, voice-to-sign-language translation, and a sign language quiz game. As a result, the app can be used for two-way communication with the deaf community. Sign language words are acquired through two different approaches: a sensor-based approach and an image processing approach. In the sensor-based approach, a portable Leap Motion Controller is connected to the mobile phone's USB port through an OTG (USB On-The-Go) adapter to capture hand movement readings. We used simple feature extraction based on statistical features such as the mean, variance, and covariance, together with a simple minimum distance classifier. Classification experiments on 15 different sign language words yielded excellent results. Compared with a similar system that uses data gloves, our sensor-based system achieves higher accuracy and processes signs in real time in a mobile application. In the image processing approach, we instead used the phone's camera to extract hand movement readings, again with simple statistical feature extraction and a minimum distance classifier. However, the image processing system did not achieve classification results as good as those of the sensor-based system.
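The abstract names the features (mean, variance, covariance) and the classifier (minimum distance) but not the exact feature layout, distance metric, or data format. The Python sketch below shows one plausible arrangement under stated assumptions: each sign is recorded as a sequence of frames of numeric readings (for example, hand coordinates), each word's template is the average feature vector of its training recordings, and distance is Euclidean. The names `extract_features`, `train`, and `classify` are hypothetical and do not come from the app itself.

```python
import math
from typing import Dict, List, Sequence

# Assumed (hypothetical) data layout: a frame is one reading per channel,
# and a recording of one sign is a sequence of T such frames.
Frame = Sequence[float]
Recording = Sequence[Frame]


def extract_features(recording: Recording) -> List[float]:
    """Map a variable-length recording to a fixed-length feature vector:
    per-channel means, per-channel variances, and pairwise covariances
    (upper triangle only; the diagonal already holds the variances)."""
    n = len(recording)
    if n < 2:
        raise ValueError("need at least two frames to estimate variance")
    d = len(recording[0])
    means = [sum(frame[j] for frame in recording) / n for j in range(d)]
    # Sample covariance matrix (divides by n - 1); it is symmetric,
    # so each off-diagonal entry is computed once and mirrored.
    cov = [[0.0] * d for _ in range(d)]
    for a in range(d):
        for b in range(a, d):
            s = sum((f[a] - means[a]) * (f[b] - means[b]) for f in recording)
            cov[a][b] = cov[b][a] = s / (n - 1)
    variances = [cov[j][j] for j in range(d)]
    covariances = [cov[a][b] for a in range(d) for b in range(a + 1, d)]
    return means + variances + covariances


def train(samples: Dict[str, List[Recording]]) -> Dict[str, List[float]]:
    """Build one template per word (assumption: the element-wise average
    of the feature vectors of that word's training recordings)."""
    templates = {}
    for word, recordings in samples.items():
        feats = [extract_features(r) for r in recordings]
        k = len(feats)
        templates[word] = [sum(col) / k for col in zip(*feats)]
    return templates


def classify(recording: Recording, templates: Dict[str, List[float]]) -> str:
    """Minimum distance classifier: return the word whose template is
    closest to the recording's feature vector (Euclidean distance assumed)."""
    x = extract_features(recording)
    return min(templates, key=lambda word: math.dist(x, templates[word]))


if __name__ == "__main__":
    # Toy two-channel data for two hypothetical words.
    templates = train({
        "hello": [[[0.0, 1.0], [1.0, 2.0], [2.0, 3.0]]],
        "thanks": [[[5.0, 5.0], [5.0, 6.0], [5.0, 7.0]]],
    })
    print(classify([[0.1, 1.1], [1.0, 2.0], [2.1, 3.0]], templates))  # hello
```

Averaging each word's training samples into a single template keeps classification to one distance computation per vocabulary word (15 for the vocabulary described above), which is inexpensive enough for on-device, real-time use. Because all features share one vector, channels with large numeric ranges dominate the Euclidean distance; normalizing features first is a common refinement, though the abstract does not say whether the app does this.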